Delivering frontier performance through applied AI

We help customers transform their business via bespoke AI consultancy and Frontier, the world’s first AI operating system.

WHAT WE OFFER

Frontier

Your platform for implementing, controlling and auditing the performance of AI across your organisation.

Connect your mission-critical apps to simulate what's going to happen, why, and what to do about it.

WHAT WE OFFER

Applied AI Services

We help our clients navigate the AI transition, from strategy and roadmapping to the design, build and deployment of bespoke AI systems that deliver productivity and profitability.

For over 10 years we’ve delivered solutions for more than 250 customers, maximising their ROI from AI investments.

WHAT WE OFFER

Fellowship

The Fellowship programme gives STEM PhD and Master's graduates, as well as postdoctoral researchers, the training to move from academia to a career in data science.

Hire from a pool of the very best data science talent, hand-picked by experts in the field.

Customer success stories

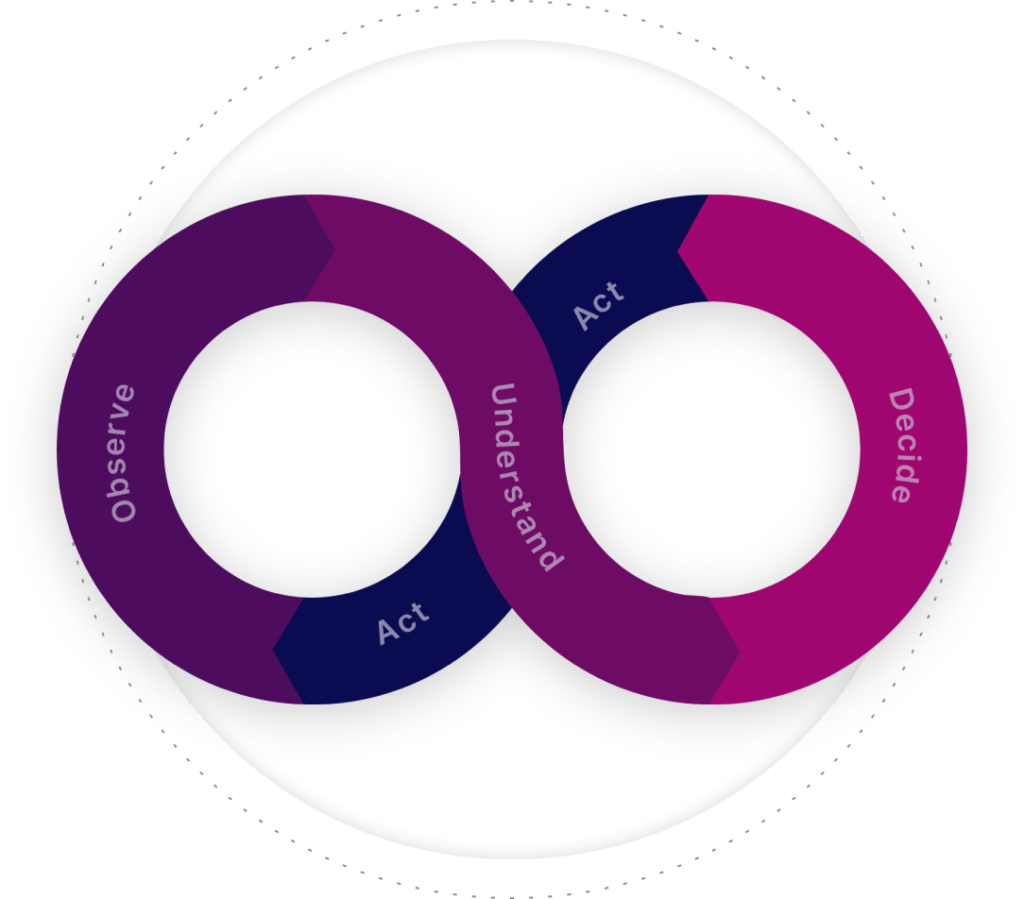

The Decision Intelligence difference

Today, organisations have access to 50x more data than 10 years ago, but 67% of leaders say decision-making is harder than ever.

Decision Intelligence combines human expertise with machine learning technology to move organisations from decisions based on data to decisions based on understanding.

That means understanding the cause-and-effect relationships that drive your KPIs, and the impact your choices will have on them.

THE DECISION LOOP